Here are some important notes on the classification quality of the B1 model currently deployed at birdsong.report.

I ran a per-class analysis of classification quality (I don't know why I hadn't done it before!) and the results are both impressive and distressing.

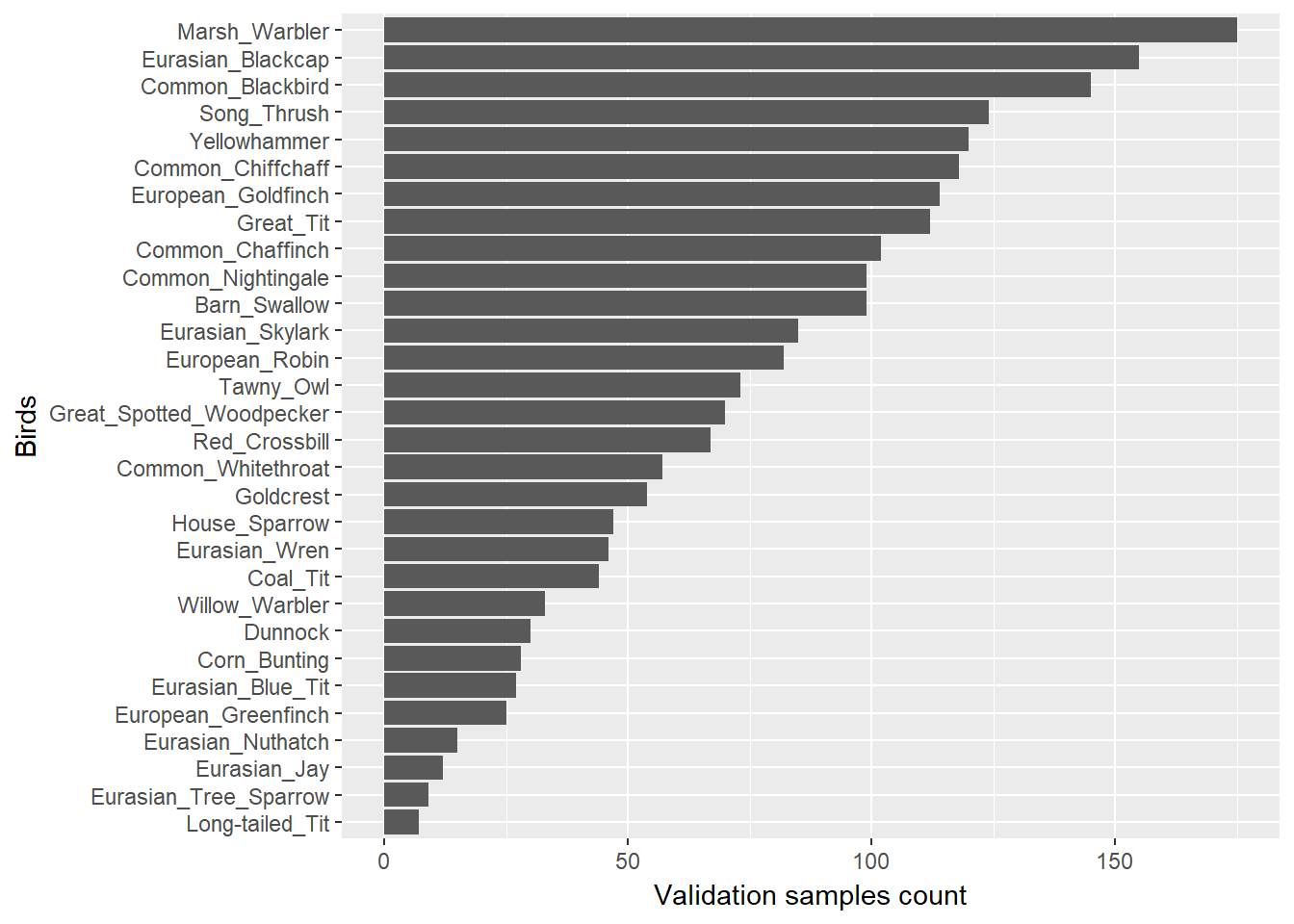

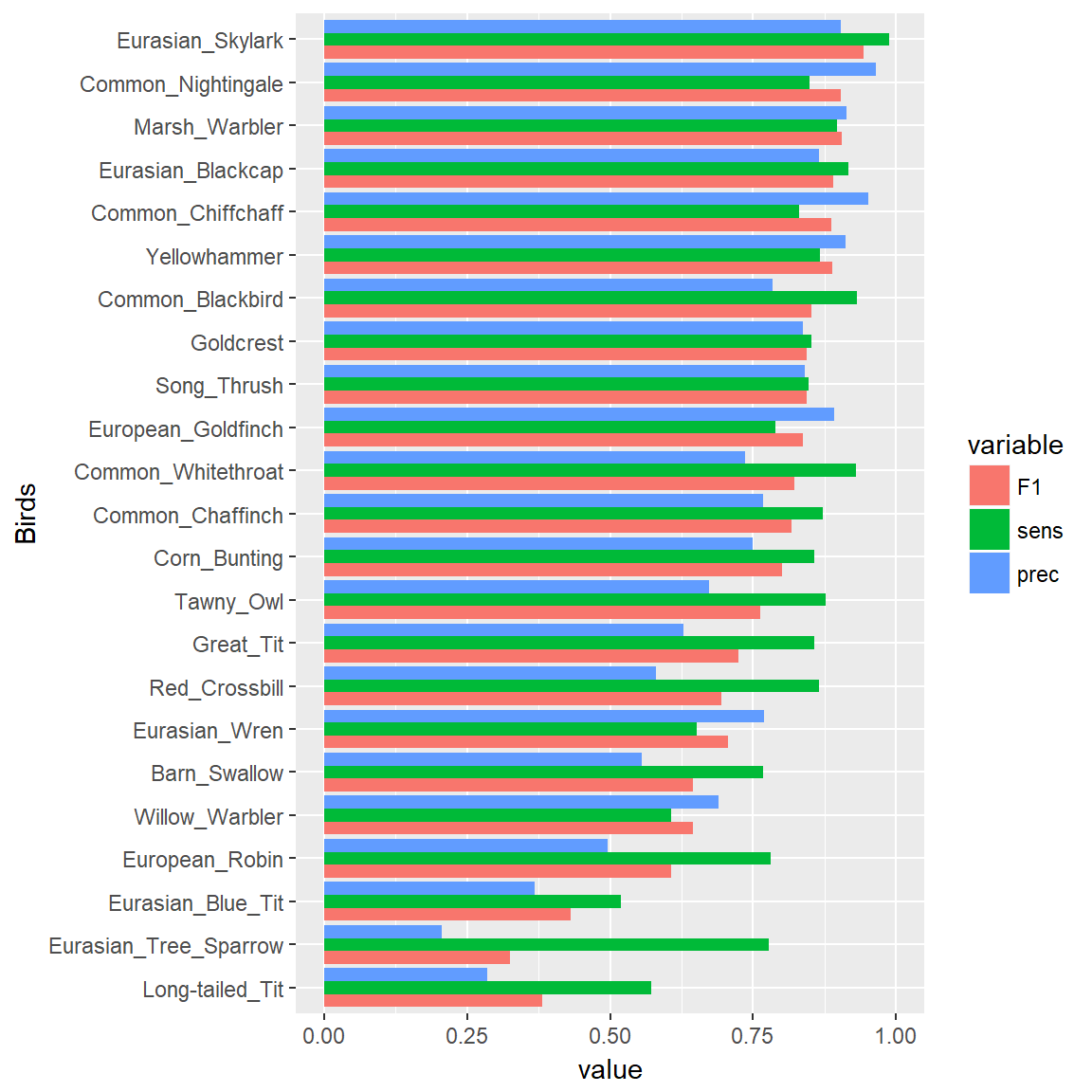

The metrics are calculated on 2174 validation samples.

The samples are not class-balanced: they are a random subset of all available data, so they reflect the class distribution of the whole dataset.

Part 1. Impressive results

First of all, some of the birds are classified really well.

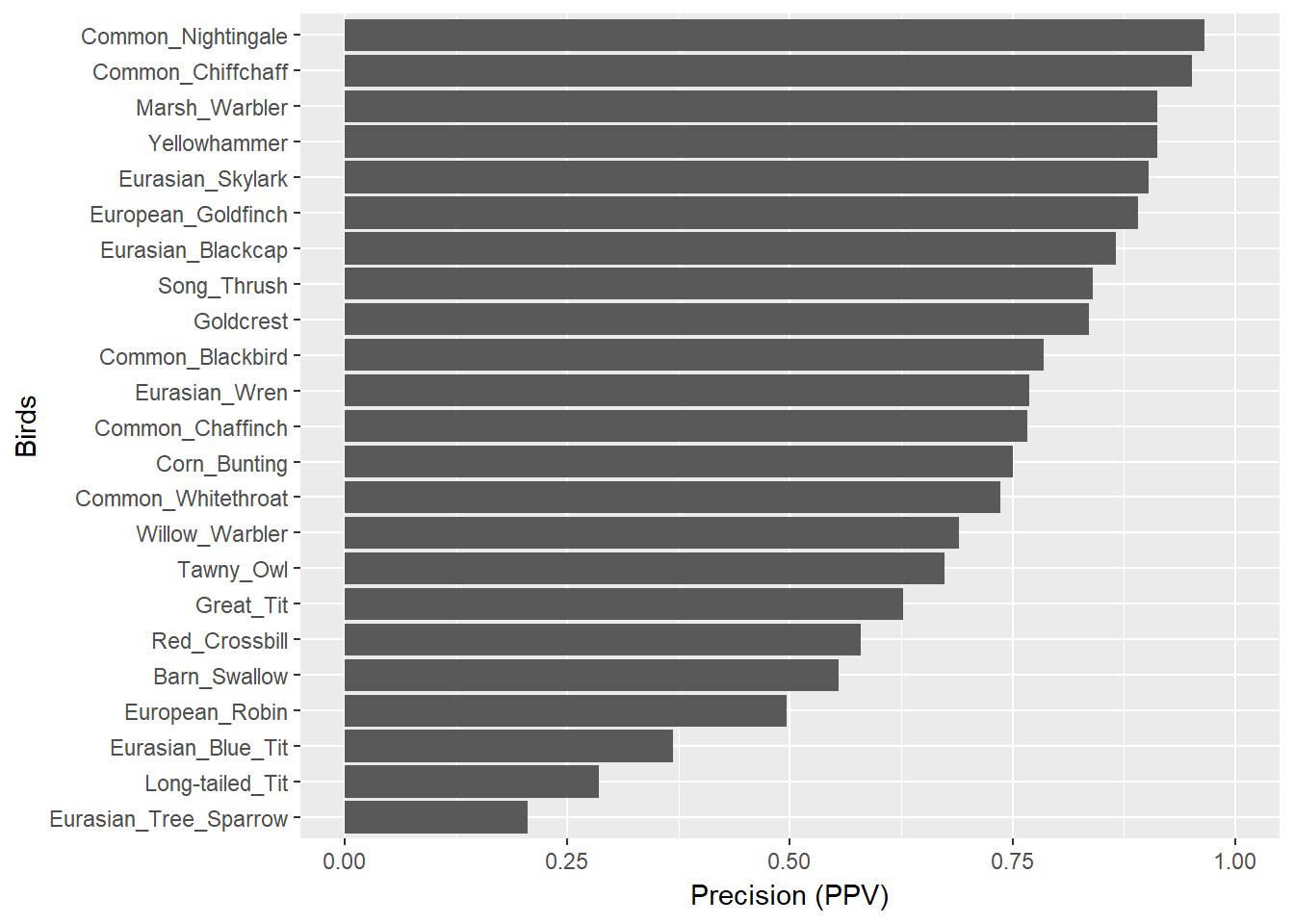

Let's look at the per-bird precision (positive predictive value, PPV) plot, which shows what fraction of the predictions for a species really were that species.

12 species have a precision above 0.75.

Among 87 recordings predicted as nightingale, 84 really were nightingales (PPV = 0.9655, the highest among all birds).
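As a minimal sketch of how such a per-class precision can be computed, assume the predictions are summarised in a confusion matrix with true classes in rows and predicted classes in columns. The function name `per_class_precision` and the toy matrix are illustrative only. The nightingale counts (84 correct out of 87 predicted) come from the text, while the remaining cells are made-up placeholders.

```python
import numpy as np

def per_class_precision(cm: np.ndarray) -> np.ndarray:
    """Precision (PPV) per class; rows of cm = true class, columns = predicted class."""
    tp = np.diag(cm).astype(float)            # correct predictions per class
    predicted = cm.sum(axis=0).astype(float)  # how often each class was predicted
    # A class that was never predicted has no defined precision: report NaN.
    return np.divide(tp, predicted, out=np.full_like(tp, np.nan), where=predicted > 0)

# Toy 2-class matrix. Column 0 reproduces the nightingale case:
# 84 true positives + 3 false positives = 87 predictions.
# The other cells (1 and 50) are placeholders, not real results.
cm = np.array([
    [84, 1],
    [3, 50],
])

nightingale_ppv = per_class_precision(cm)[0]
print(round(nightingale_ppv, 4))  # 0.9655
```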

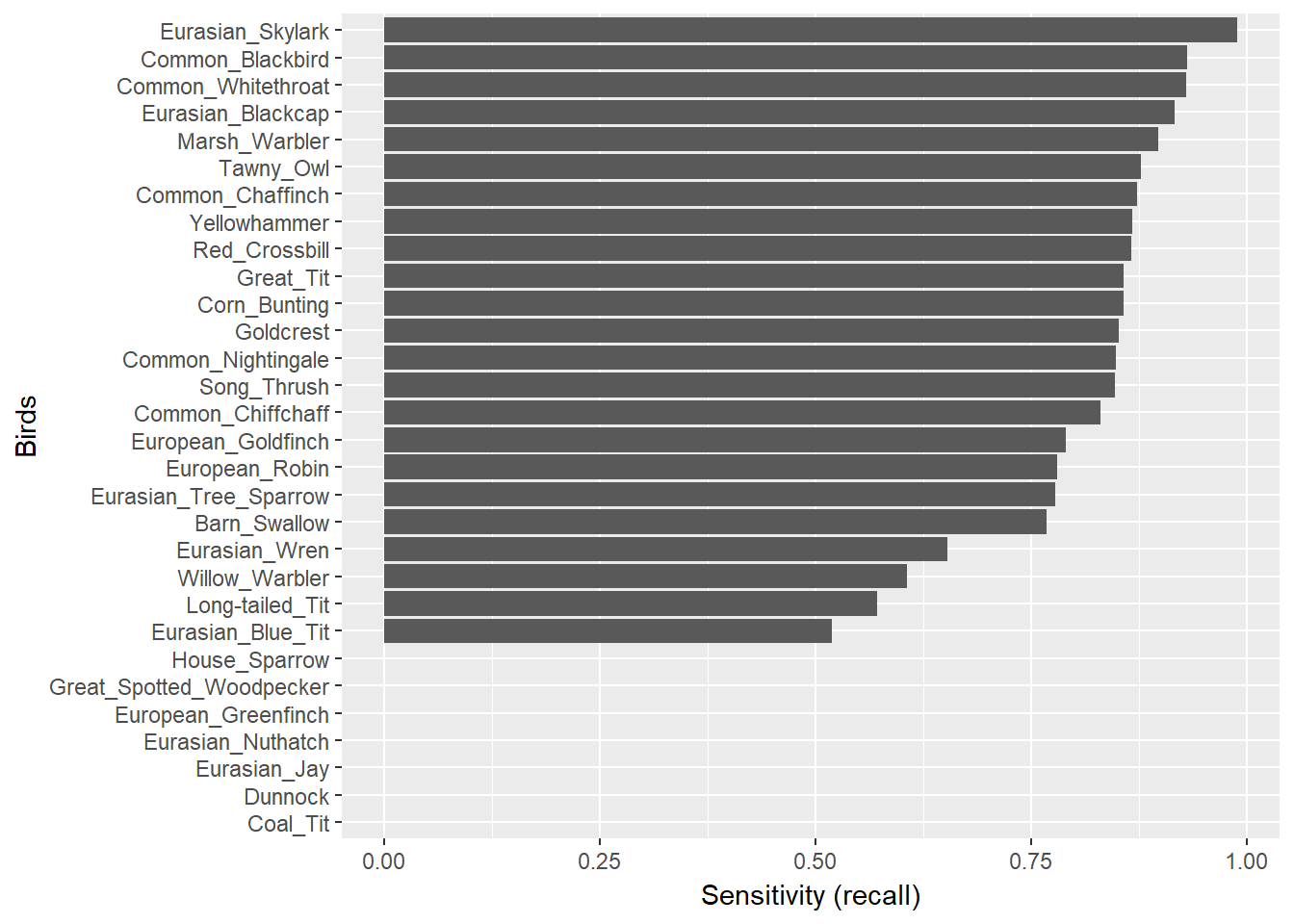

Sensitivity is even more impressive for some species.

The skylark has the highest sensitivity, 0.988 (84 of the 85 skylark songs presented were detected)!

But here comes the distressing part: the network did not detect 7 of the presented species at all!

You can see them with a sensitivity of 0 at the bottom of the plot.
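Sensitivity is the mirror image of precision: correct predictions divided by the number of recordings that truly belong to the class. The sketch below, under the same assumed confusion-matrix layout (rows = true, columns = predicted), computes it per class and lists the classes that were never detected. Only the skylark row (84 of 85) is taken from the text. The other labels and counts are hypothetical.

```python
import numpy as np

def per_class_sensitivity(cm: np.ndarray) -> np.ndarray:
    """Sensitivity (recall) per class; rows of cm = true class, columns = predicted class."""
    tp = np.diag(cm).astype(float)
    actual = cm.sum(axis=1).astype(float)  # recordings that truly belong to each class
    return np.divide(tp, actual, out=np.full_like(tp, np.nan), where=actual > 0)

# Hypothetical labels and counts, except the skylark row (84 of 85 detected).
labels = ["skylark", "species_b", "species_c"]
cm = np.array([
    [84, 1, 0],   # skylark
    [0, 40, 0],   # placeholder class, always recognised
    [5, 15, 0],   # placeholder class, never recognised
])

sens = per_class_sensitivity(cm)
undetected = [name for name, s in zip(labels, sens) if s == 0]

print(round(float(sens[0]), 3))  # 0.988
print(undetected)                # ['species_c']
```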

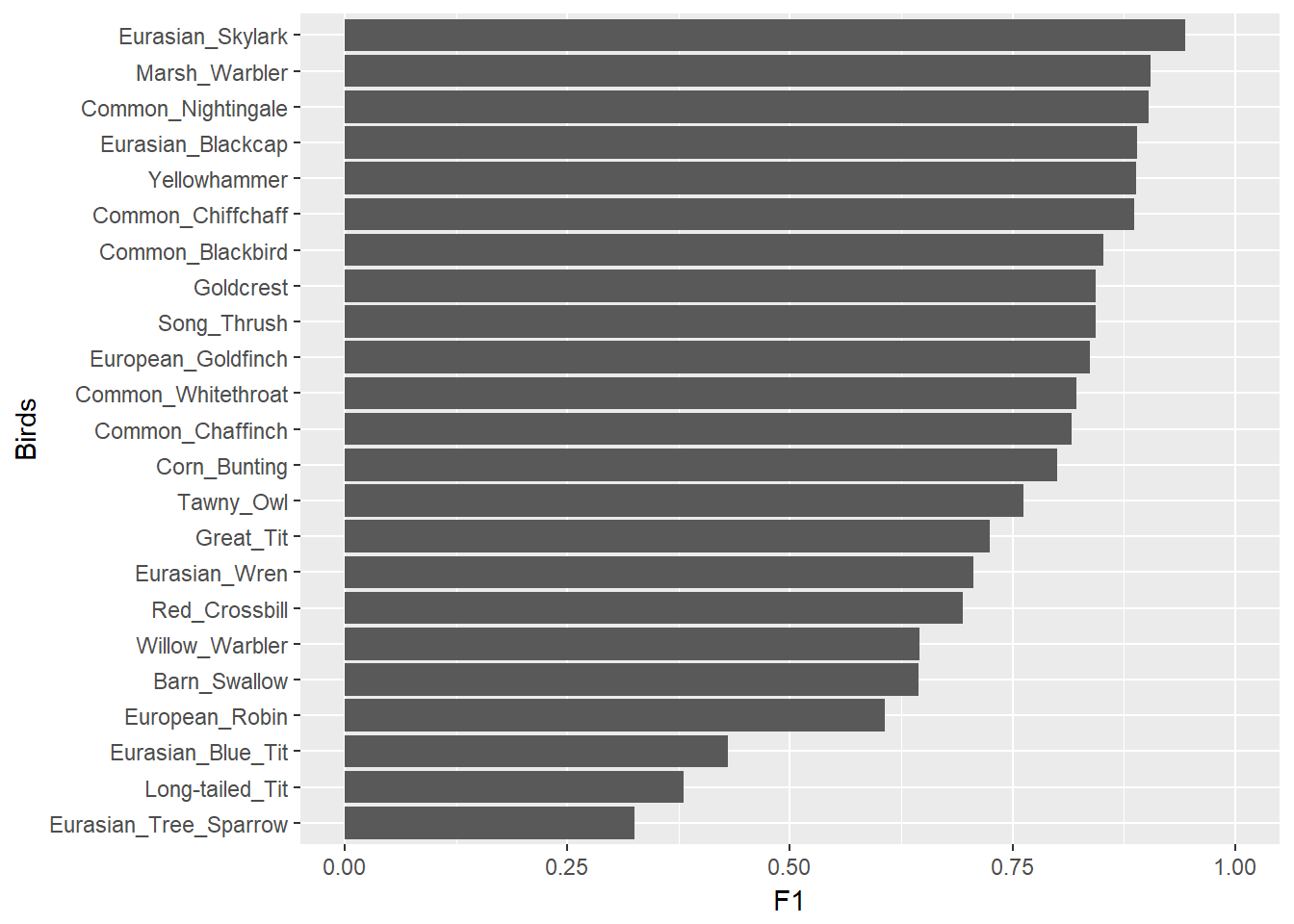

But before discussing these failures, let's look at the last two plots: F1-scores for the different classes and a combined plot of all 3 metrics.

Not bad!
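For reference, the F1-score is the harmonic mean of precision and sensitivity. The helper below is a small sketch. The name `f1_score` and the example values 0.75 and 0.9 are chosen for illustration and are not results from the model.

```python
def f1_score(precision: float, sensitivity: float) -> float:
    """Harmonic mean of precision and sensitivity; defined as 0 when both are 0."""
    if precision + sensitivity == 0:
        return 0.0
    return 2 * precision * sensitivity / (precision + sensitivity)

# Illustrative values only: precision 0.75 and sensitivity 0.9.
example_f1 = f1_score(0.75, 0.9)
print(round(example_f1, 3))  # 0.818
```

Because it is a harmonic mean, the F1-score is pulled towards the lower of the two values, so a class with a sensitivity of 0 gets an F1 of 0 whatever its precision.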

Interestingly, sensitivity is almost always higher than precision. Why is that? Did it happen by chance?

Part 2. Distressing results
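One possible explanation, offered as a hypothesis and not as a verified finding for this model: when each recording gets exactly one label, every false negative of one class is a false positive of some other class. If a few classes are never predicted, all of their recordings end up as false positives elsewhere, which lowers the precision of the remaining classes without touching their sensitivity. The toy matrix below (entirely made-up numbers) illustrates the effect.

```python
import numpy as np

# Hypothetical 3-class confusion matrix (rows = true, columns = predicted).
# Class 2 is never predicted, so its 40 samples spill into classes 0 and 1.
cm = np.array([
    [45, 5, 0],
    [5, 45, 0],
    [20, 20, 0],
])

tp = np.diag(cm)
fn = cm.sum(axis=1) - tp  # missed samples per true class
fp = cm.sum(axis=0) - tp  # wrong predictions per predicted class

sensitivity = tp / cm.sum(axis=1)
with np.errstate(divide="ignore", invalid="ignore"):
    precision = tp / cm.sum(axis=0)  # NaN for the class that is never predicted

print(fp.sum() == fn.sum())   # True: total false positives equal total false negatives
print(sensitivity[:2])        # [0.9 0.9]
print(precision[:2].round(3)) # [0.643 0.643]
```

In this toy case the two detected classes both show a sensitivity above their precision, purely because they absorb the samples of the undetected class.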

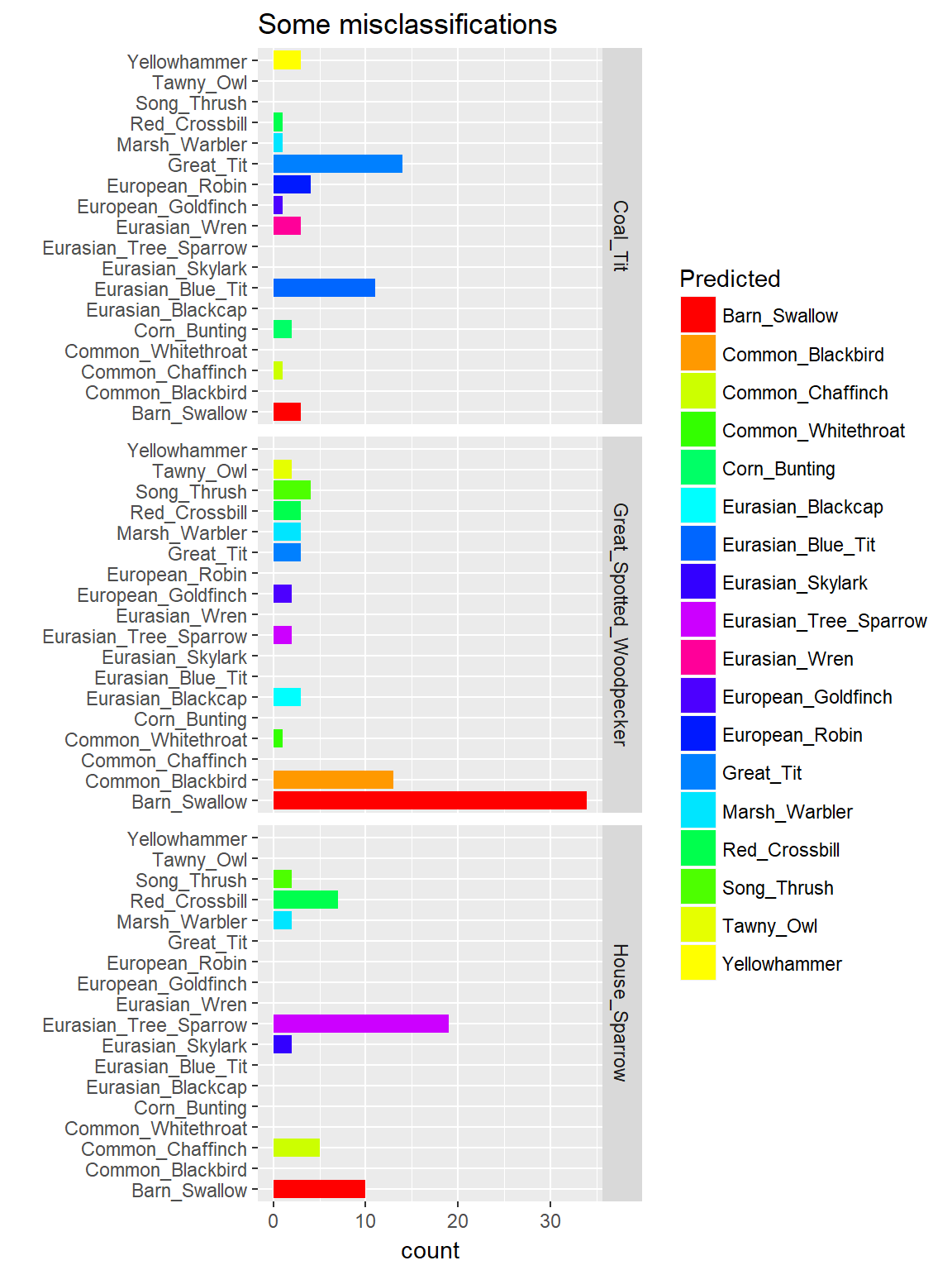

The network did not detect 7 known species in any of the 243 recordings in which they were presented.

For instance, take the Great Spotted Woodpecker, Coal Tit and House Sparrow. The validation set contained 70 recordings of Great Spotted Woodpecker, 47 of House Sparrow and 44 of Coal Tit. None of them was classified correctly.

How did the network classify them?

The network labelled all of the Coal Tit samples as Eurasian Blue Tit or Great Tit. They are all tits, so perhaps it is understandable that the network confuses them.

The situation is similar for the House Sparrow: it is classified as Eurasian Tree Sparrow.

Well, maybe it is too early to train the network to tell apart species from the same family.

The woodpecker is a different story: it is classified as Swallow. That is really strange.

Judge for yourself:

To me they sound very different, so this is a direction for investigation.
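To see where the recordings of a missed species end up, it is enough to read off that species' row of the confusion matrix. The sketch below assumes rows are true classes and columns are predicted classes. The function `top_confusions` is a hypothetical helper, and while the 44 Coal Tit recordings come from the text, the split between Eurasian Blue Tit and Great Tit and all other counts are invented for illustration.

```python
import numpy as np

def top_confusions(cm, labels, true_label, k=3):
    """Return up to k (predicted label, count) pairs for one true class, largest first."""
    row = cm[labels.index(true_label)]
    order = np.argsort(row)[::-1][:k]  # indices of the k largest counts
    return [(labels[i], int(row[i])) for i in order if row[i] > 0]

labels = ["Coal Tit", "Eurasian Blue Tit", "Great Tit"]
cm = np.array([
    [0, 30, 14],  # 44 Coal Tit recordings; the 30/14 split is made up
    [0, 50, 2],   # placeholder counts
    [0, 3, 60],   # placeholder counts
])

print(top_confusions(cm, labels, "Coal Tit"))
# [('Eurasian Blue Tit', 30), ('Great Tit', 14)]
```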

Conclusions

It seems that the network is not ready to distinguish species from the same family. Maybe in the next release I'll keep only one tit and one sparrow.

For the other species, the network performs reasonably well, so I'll try adding some new species.