As the world moves toward the Internet of Things, there are lots of cheap environmental sensors available on the market.

When this trend was just starting several years ago, I came across Toradex, a company that sells embedded devices. Its Oak series of sensors caught my eye. Toradex supplied Microsoft Robotics Studio libraries for them, which was just what my student project needed, so I ordered two.

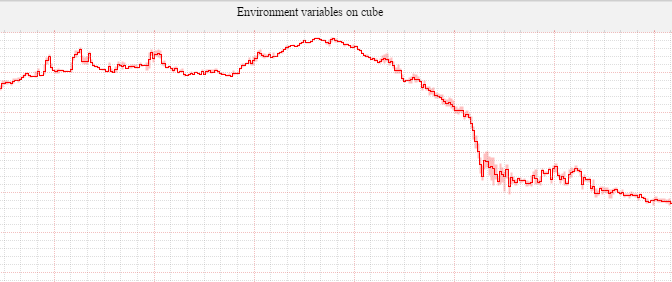

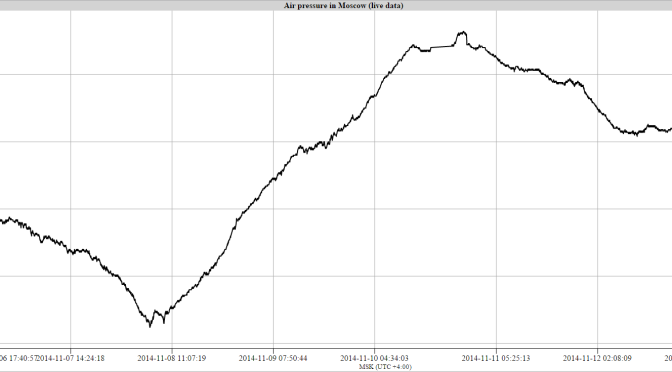

Now I’m building a monitoring system for a summer house, based on a Raspberry Pi, and these Toradex sensors are well suited to gathering environmental data.

The official site provides a sample for using the sensors on Linux, but I run FreeBSD.

So I started thinking about a simple way to gather the data on BSD.

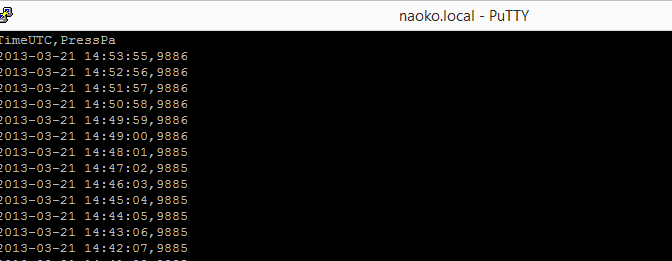

The sensors identify themselves as HID devices. After a short investigation I found that FreeBSD provides the usbhidctl utility for communicating with HID devices. That looked promising, as it does not require Linux emulation. A single command fetches all the current values from the sensor!
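To make the idea concrete, here is a minimal Python sketch of reading a sensor through usbhidctl. It is not the script from this post. It assumes the sensor appears as `/dev/uhid0`, that `usbhidctl -f <device> -a` prints one `name=value` pair per line, and that the values are integers; the item names in the usage note are made up for illustration, so check the real output on your own box.

```python
import subprocess


def parse_hid_values(text):
    """Parse 'name=value' lines into a dict, skipping anything else."""
    values = {}
    for line in text.splitlines():
        name, sep, raw = line.strip().partition("=")
        if not sep:
            continue  # not a name=value line
        try:
            values[name.strip()] = int(raw.strip())
        except ValueError:
            continue  # non-integer value, ignored in this sketch
    return values


def read_hid_device(device="/dev/uhid0"):
    """Run usbhidctl on one device (assumed path) and return its items."""
    result = subprocess.run(
        ["usbhidctl", "-f", device, "-a"],
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_hid_values(result.stdout)
```

Calling `parse_hid_values("Temperature=29815\nHumidity=4512\n")` returns a dictionary with those two hypothetical items. Keeping the parsing separate from the subprocess call means it can be tried out without a sensor attached.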

The other task was choosing a data storage engine. My colleague Eugene suggested using collectd or statsd. Both can store the data and stream it to a remote host for further storage. I decided on collectd because it is written in C, so my Raspberry Pi box needs only a minimal set of packages.

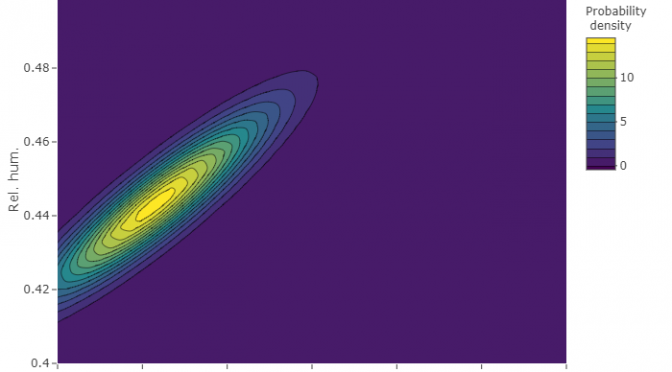

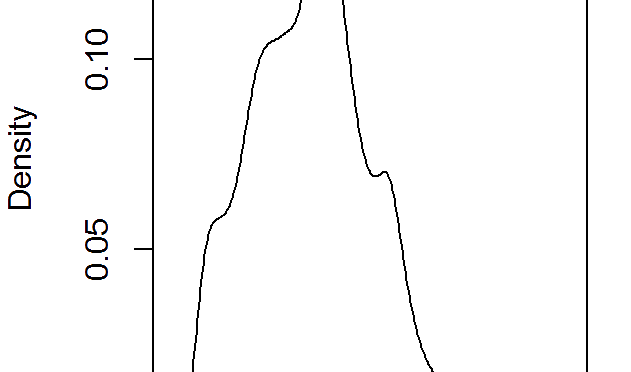

In the end I wrote a script that collectd invokes. The script enumerates the USB HID devices, finds the Toradex sensors, reads their values, applies the proper unit conversions and returns the data as a string in a format collectd understands.
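The last two steps, unit conversion and collectd output, can be sketched in Python as follows. This is an illustration under stated assumptions, not the downloadable script: it assumes the raw temperature arrives in hundredths of a kelvin, and the plugin name `oak-0` and the host name `pi` are hypothetical. The `PUTVAL` line follows collectd's plain-text protocol, which its Exec plugin reads from the script's standard output.

```python
def centikelvin_to_celsius(raw):
    """Convert a raw reading, assumed to be in 1/100 K, to degrees Celsius."""
    return raw / 100.0 - 273.15


def format_putval(host, plugin, type_name, value, interval):
    """Build one PUTVAL line; 'N' tells collectd to use the current time."""
    identifier = f"{host}/{plugin}/{type_name}"
    return f'PUTVAL "{identifier}" interval={interval} N:{value:.2f}'
```

For example, `print(format_putval("pi", "oak-0", "temperature", centikelvin_to_celsius(29815), 10))` emits a line collectd can ingest. When the script runs under the Exec plugin, collectd supplies the host name and interval in the `COLLECTD_HOSTNAME` and `COLLECTD_INTERVAL` environment variables.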

I’m sharing it here so you can download it, then modify and extend it for your needs.
