In this post I describe the neural network architecture that I try as a bird song classifier.

Intro

When I first came up with an idea of building a bird song classifier I started to google for the training dataset.

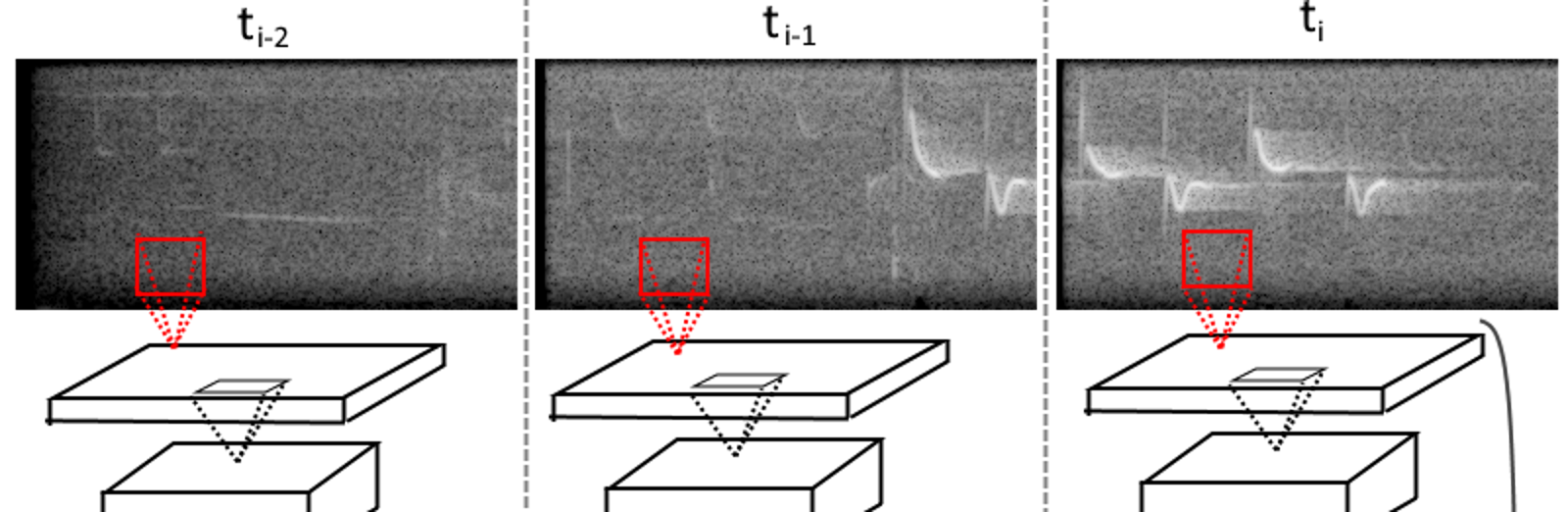

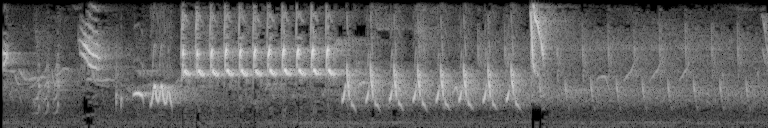

I found xeno-canto.org and the first thing that caught my attention was spectrograms.

(Sepctrogram is visual representation of how spectrum evolves through time. The vertical axis reflects frequency, the horizontal represents time. Bright pixels on the spectrogram indicate that for this particular time there is a signal of this particular frequency)

Well, spectrograms are ideal for visual pattern matching!

Why do I need to analyse sound when we have such expressive visual patterns of songs? That was my thoughts.

I decided to train neural net to classify spectrograms.

Almost immediately I found corresponding paper on the Web: Convolutional Neural Networks for Large-Scale BirdSong Classification in Noisy Environment. That’s perferct! The idea should work if someone had similar idea!

Spectrogram classification peculiarities

The spectrogram classification is rather different from real world image (e.g. photos) classification.

First, the patterns in the spectrogram do not change their scale while the objects in real world photos can be at different distances from the camera resulting in being in different scales.

Second, the image dimensions. In the spectrogram vertical dimension is frequency. And the sound patterns of bird song are usually localised in frequency domain. This means that the patterns usually appear around the same vertical part of the image. We could utilise it. For instance we do not need to care much about equivariance along vertical dimension.

Horizontal dimension is the time. We can utilise it too by applying time specific techniques of classification. For instance LSTM cells perform really well in classifying time evolving data. We can apply LSTM to spectrogram through “scanning” along horizontal dimension, making LSTM consume time frames.

As there are so many differences from classification of real world images, using visual pattern recognition tricks like inception modules are not such useful here.

So I decided to make the network and teach it from scratch.

The network

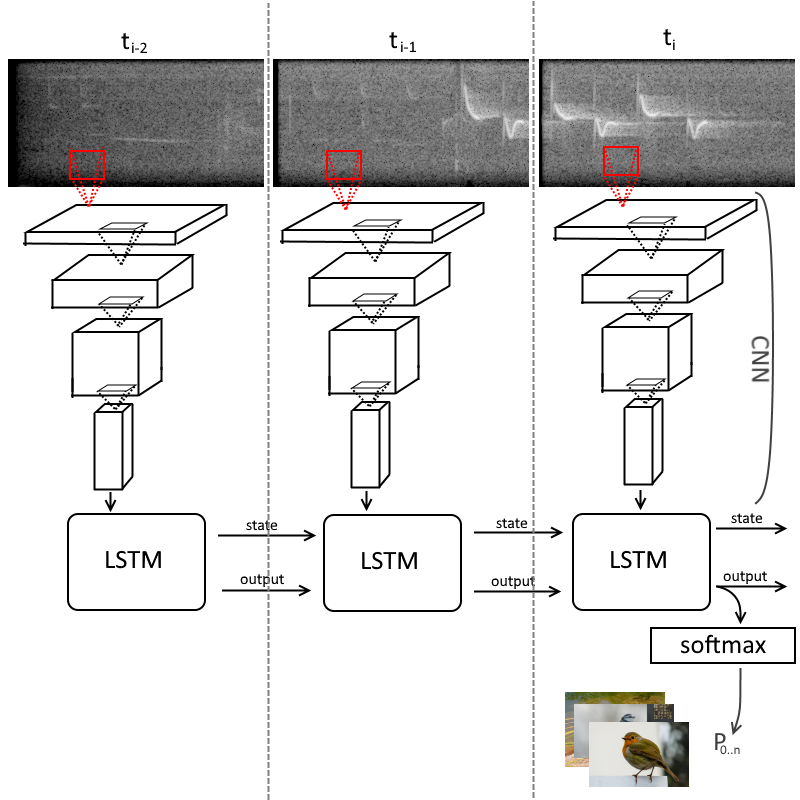

Basically it is Deep Convolutional network with LSTM cell instead of dense layers.

It consumes spectrogram of a recording as an input, splited by one second long intervals. So every image has the same height and width.

The input frame is passed through convolutional layers. This transforms 256×128 image into 16x8x64 data cube (four convolutional layers with 2×2 max pooling reduce the size of the image by two four times, resulting data cube also has 64 channels). Then this data cube is consumed by LSTM cell.

Finnaly the output layer with softmax produces 30 probabilities each of those corresponds to a separate bird.

Training results

For the training set I use 8853 independent recordings that gave 74642 separate training samples (not intersecting, ten seconds long recordings – ten spectrogram frames, one second long each).

For now the model achieved accuracy of 67% on the unfamiliar data. I consider it pretty good, as random guess would give 3.33%.

Right now I have even more promising network architecture in my mind, but first I’ll deploy the current model, so everyone will be able to try infer the bird song with it.